Most welding lines still rely on manual inspection or post-process testing. Inspectors visually check weld beads, look for defects, and make decisions based on their experience. This works for small batches, but once production scales, problems start appearing.

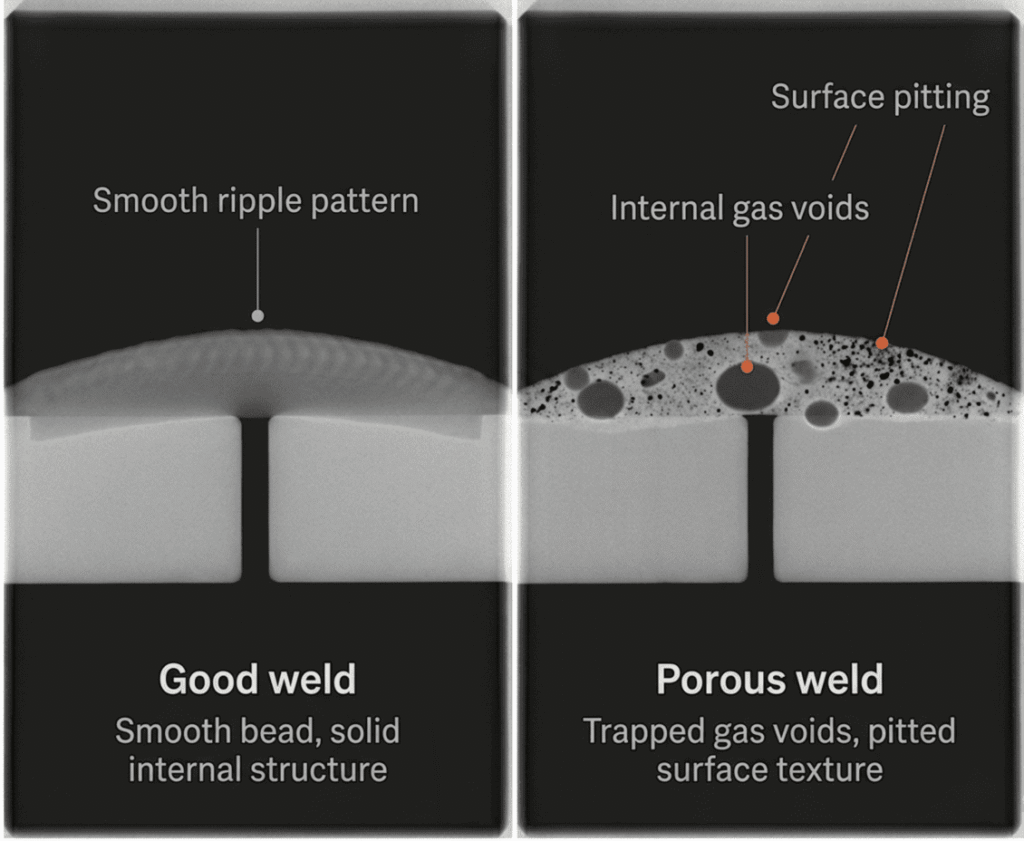

Porosity is one of the most common and difficult-to-detect welding defects. Porosity-related rejections account for an estimated 20% to 40% of all weld repair work in heavy industry. The exact value depends on the process and sector.

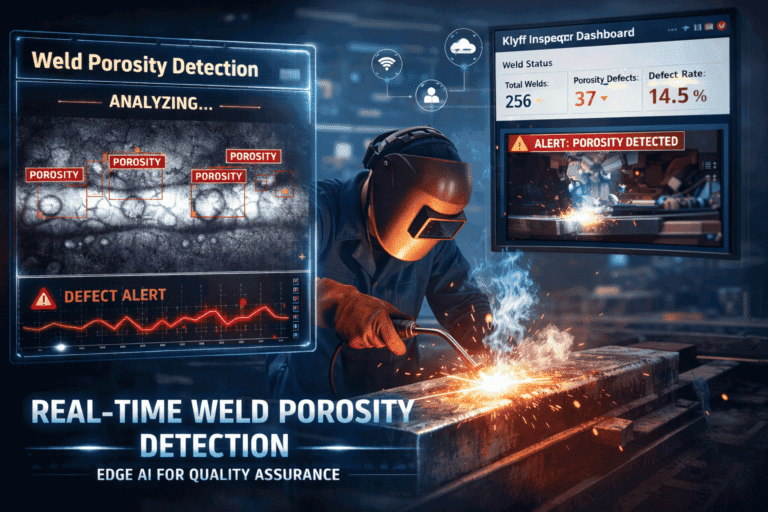

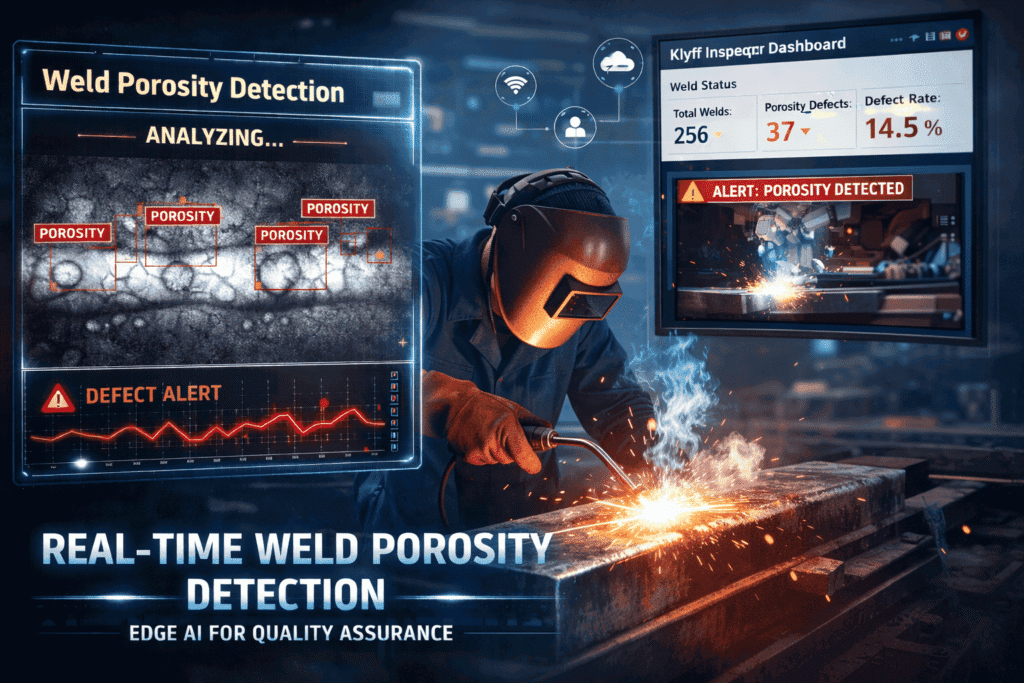

In this blog, we build a real-time Weld Porosity Detection Edge AI system that runs entirely at the edge. This enables detection during the welding process itself. Klyff Inspeqtr detects porosity and generates instant alerts. It enables immediate corrective action.

By shifting detection from reactive (after welding) to proactive (during welding), this approach significantly reduces rework. It improves weld quality and moves manufacturing closer to a zero-defect production environment.

Why Edge AI for Weld Porosity Detection

Edge AI does not simply automate existing human processes. It changes the fundamental architecture of weld quality assurance in several important ways.

Post-weld to in-process:

Edge AI systems analyze real-time video of the welding process. This enables the detection of porosity while the weld bead is being formed. It replaces traditional post-process inspection with in-process detection.

Rework reduction:

Rework of a rejected weld costs 5 to 10 times more than the original welding process. This includes re-inspection, repair welding, heat treatment, and scheduling disruption. In high-volume production, even a 1% reduction in rework can lead to significant annual cost savings.

Continuous full-coverage inspection:

Traditional inspection methods are often limited to sampling due to time and cost constraints. Edge AI enables continuous inspection of every weld. This ensures full coverage without increasing per-unit inspection cost.

Latency reduction:

By performing inference directly at the edge, the system avoids the network round trip to the cloud and does not depend on cloud availability.

What Intel Edge AI Enables

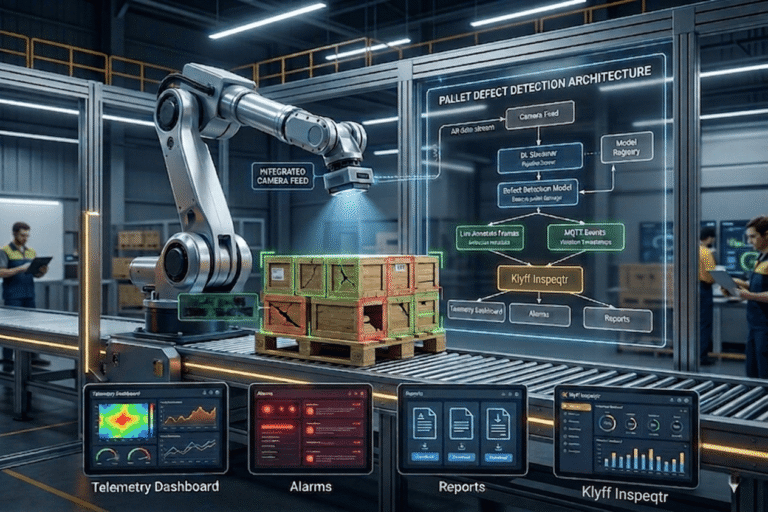

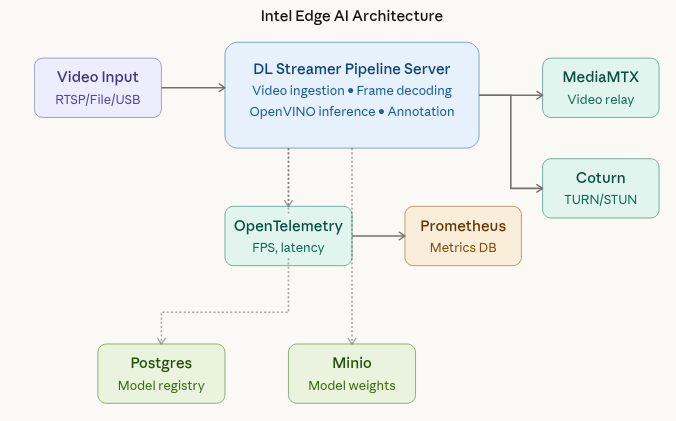

The Edge AI Suite architects the Weld Porosity Detection application as a distributed, microservice-driven system tailored for industrial edge environments. At its core, the DL Streamer Pipeline Server orchestrates the ingestion of welding video streams and executes optimized deep learning inference pipelines for real-time classification of porosity defects. MediaMTX is responsible for efficient video transport and streaming, enabling low-latency visualization of inference results through WebRTC.

For observability, OpenTelemetry Collector and Prometheus gather metrics, allowing operators to monitor system performance, pipeline health, and inference throughput. Postgres and MinIO handle persistent storage and model lifecycle management, maintaining model artifacts, configuration parameters, and associated metadata.

Collectively, this architecture provides a highly scalable, modular, and extensible framework capable of supporting both live and recorded video inputs, while ensuring continuous, low-latency weld inspection without necessitating changes to the underlying system design.

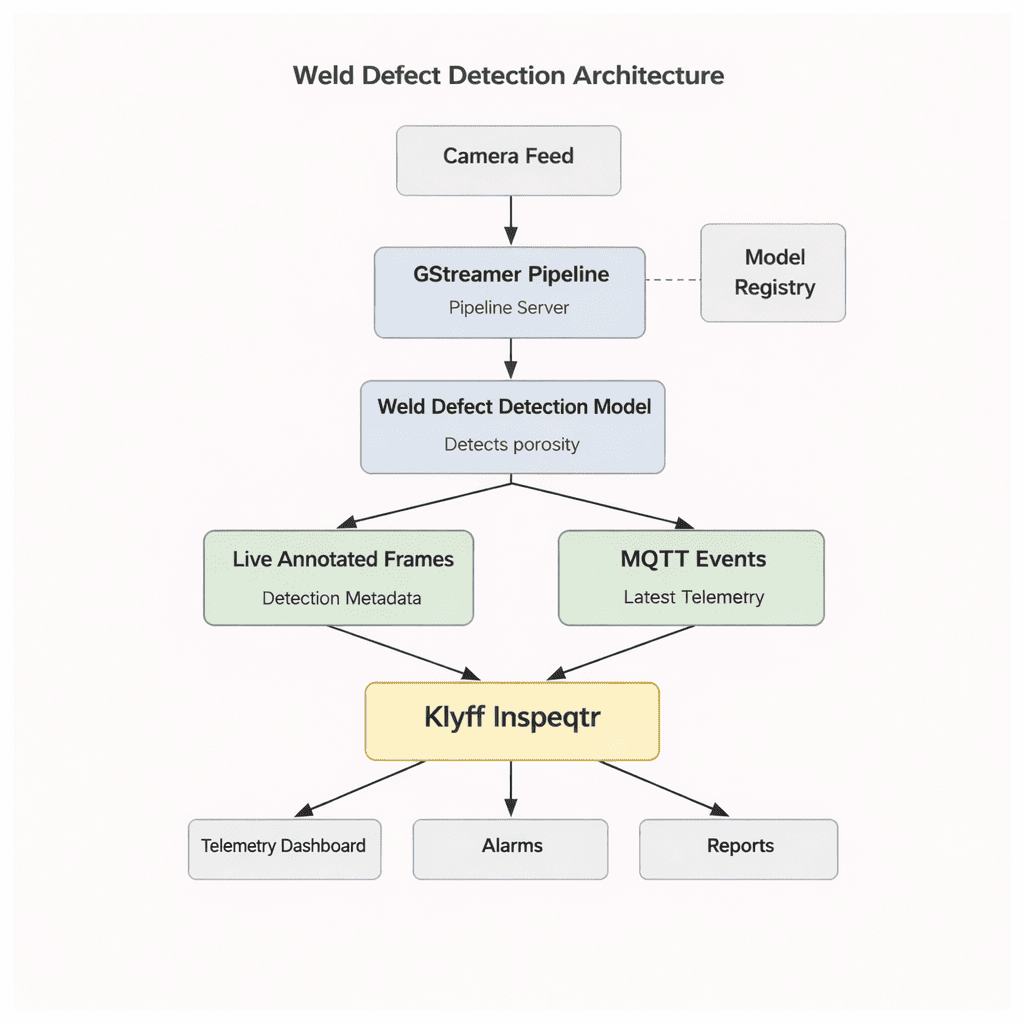

From Camera to Decision

The pipeline supports multiple input sources, including files, RTSP streams, and webcams, enabling both testing and real-time deployment. File inputs allow replaying specific scenarios during development, while RTSP and webcam sources support live industrial camera feeds. This flexibility allows seamless switching between sources without requiring any changes to the inference pipeline configuration.
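The idea that only the source changes between inputs can be sketched as follows. This is a minimal illustration, not the actual DL Streamer Pipeline Server request schema: the `"type": "uri"` / `"type": "webcam"` layout, the `COMMON_SETTINGS` placeholder, and the function names are assumptions made for this example, so check the Pipeline Server documentation for the real fields.

```python
import json
from urllib.parse import urlparse

# Hypothetical settings shared by every request; the field names are placeholders.
COMMON_SETTINGS = {"parameters": {"publish_frame": True}}


def build_source(location: str) -> dict:
    """Map a file URI, RTSP URL, or webcam device path to a source section.

    The dictionary layout is an assumption made for this sketch.
    """
    if location.startswith("/dev/video"):
        return {"type": "webcam", "device": location}
    if urlparse(location).scheme in ("file", "rtsp"):
        return {"type": "uri", "uri": location}
    raise ValueError(f"unsupported source: {location!r}")


def build_request(location: str) -> str:
    """Build a JSON request body; only the source section depends on the input."""
    return json.dumps({"source": build_source(location), **COMMON_SETTINGS})
```

Calling `build_request("file:///data/weld_sample.mp4")` and `build_request("rtsp://192.168.0.10:554/weld")` (both locations are made up) yields bodies that differ only in their `source` section, which is the property the paragraph above describes.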

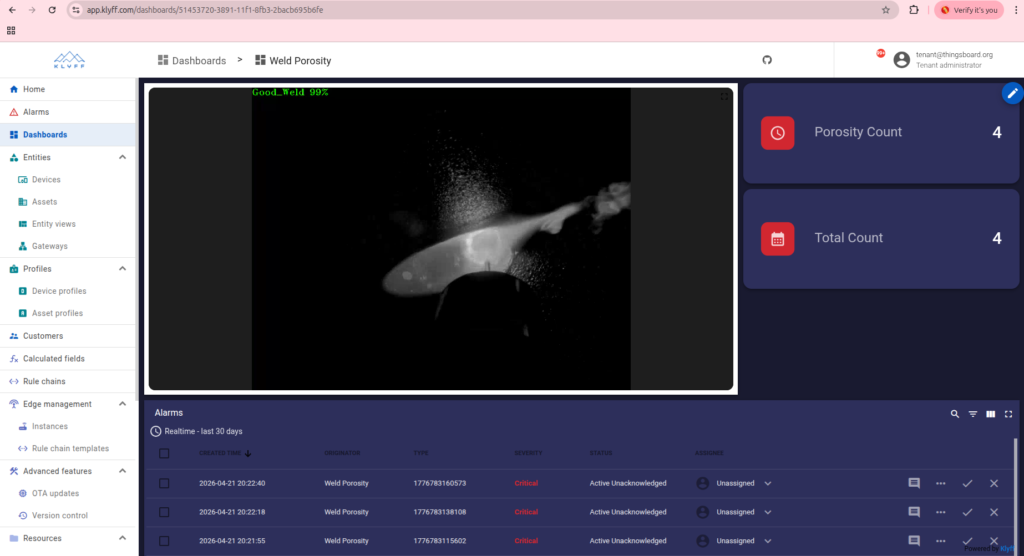

Once the pipeline is running, the DL Streamer Pipeline Server processes every frame, performs AI inference, and overlays the output with annotations highlighting detected porosity. The Klyff dashboard receives this information and enables users to visualize the results in real time through dashboards, alerts, and reports.

Model Development

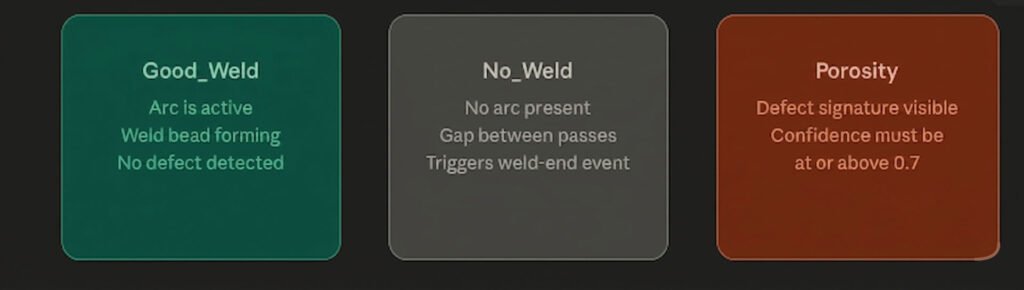

The Weld Defect Detection system builds on a carefully trained AI model that enables accurate real-time inference at the edge. The process starts with data collection, in which the system captures welding videos and images under varying conditions, materials, lighting, and defect scenarios. It then annotates the dataset with labels such as Good_Weld, No_Weld, and Porosity to ensure high-quality ground truth.

The system preprocesses the data and splits it into training, validation, and test sets. During training, the model learns to detect key visual patterns like irregular weld pools, texture inconsistencies, and gas-related artifacts that indicate porosity. It optimizes hyperparameters such as learning rate, batch size, and optimizer, while using data augmentation techniques like rotation, brightness changes, and noise injection to improve generalization.
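One way to split the data is a stratified split, which keeps the share of Good_Weld, No_Weld, and Porosity samples roughly equal across the three sets. The sketch below is an illustration only: the 70/15/15 ratios, the fixed seed, and the `stratified_split` helper are assumptions, not the split used for the actual model.

```python
import random
from collections import defaultdict


def stratified_split(samples, train=0.7, val=0.15, seed=42):
    """Split (path, label) pairs into train/val/test, preserving label ratios.

    Whatever is not assigned to train or val goes to test.
    """
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append((path, label))

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    splits = {"train": [], "val": [], "test": []}
    for label in sorted(by_label):
        items = by_label[label]
        rng.shuffle(items)
        n_train = int(len(items) * train)
        n_val = int(len(items) * val)
        splits["train"] += items[:n_train]
        splits["val"] += items[n_train:n_train + n_val]
        splits["test"] += items[n_train + n_val:]
    return splits
```

Splitting per label matters most for the rarer class: a purely random split could leave very few Porosity samples in the validation or test set.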

After training, the model is evaluated using metrics like accuracy, precision, recall, and F1-score to ensure reliable performance across different scenarios.
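For reference, these metrics can be computed per class from the true and predicted labels. The sketch below uses the standard definitions (precision = TP / (TP + FP), recall = TP / (TP + FN), F1 as their harmonic mean); the function names and the tiny label lists in the usage note are made up for illustration.

```python
def accuracy(y_true, y_pred):
    """Fraction of frames whose predicted label matches the ground truth."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_metrics(y_true, y_pred, labels):
    """Return precision, recall, and F1 for each label (one-vs-rest)."""
    metrics = {}
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics
```

With `y_true = ["Porosity", "Porosity", "Good_Weld", "Good_Weld", "No_Weld", "No_Weld"]` and one Porosity frame misclassified as Good_Weld, Porosity precision stays at 1.0 while its recall drops to 0.5. For defect detection, recall on the Porosity class is usually the number to watch, since a missed defect costs more than a false alarm.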

OpenVINO Model Optimization & Deployment

OpenVINO offers a performance-tuned inference engine designed for Intel hardware in edge AI scenarios. It takes models trained in popular frameworks such as PyTorch and TensorFlow, or exported to ONNX, and converts them into an optimized Intermediate Representation (IR). This IR consists of two files: .xml for the model structure and .bin for the weights, enabling efficient and fast execution on Intel devices.

Export and Convert a YOLO Model

# Export a YOLOv8 model directly to OpenVINO IR format

from ultralytics import YOLO

model = YOLO('yolov8n.pt')

model.export(format='openvino', imgsz=640)

# Ultralytics runs the OpenVINO conversion internally, so no separate conversion step is needed

INT8 Quantization

OpenVINO’s Post-Training Quantization (PTQ) reduces model precision from 32-bit floating point to 8-bit integers, which brings several practical benefits:

- Smaller Model Size: Lower precision reduces memory usage, making the model quicker to load and easier to store.

- Improved Performance: Inference becomes faster, especially when running on CPUs in modern Intel hardware.

- Negligible Accuracy Impact: With proper calibration using a representative dataset (around 100–500 images), the drop in accuracy is usually less than 1%.
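To make the precision reduction concrete, here is a toy example of the arithmetic behind 8-bit quantization. It uses simple symmetric per-tensor quantization in plain Python. This illustrates the idea only; it is not how OpenVINO's quantization tooling is implemented, and the function names are made up for this sketch.

```python
def quantize_int8(values):
    """Symmetric quantization: map floats to integers in [-127, 127] with one scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the 8-bit integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.01, 1.0]   # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Rounding means each restored value is off by at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now needs 1 byte instead of 4, which is where the size reduction comes from. The price is the rounding error `max_error`, bounded by `scale / 2`. Calibration on representative data serves to choose scales that keep this error small for the activation ranges the model actually sees.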

To learn more about how to apply quantization and evaluate its effects, refer to the guide on Quantization in Edge AI.

IoT Platform Integration: From Inference to Action

AI predictions are only effective when real systems can act on them. The system uses MQTT to send detection results from the weld porosity detection setup to IoT platforms like Klyff Inspeqtr. These platforms transform raw model outputs into clear, structured events that users can monitor and use for decision-making.

Integration of Klyff Inspeqtr

A weld porosity detection system needs to do more than just find defects. It needs alerts, dashboards, tracking of past events, live monitoring, and quick action from operators. To do this, a custom MQTT extension is used to change the format of incoming detection data so that it works with platforms like Inspeqtr.

The extension reads the inference metadata, identifies defects, and converts them into structured telemetry, device attributes, and alert events linked to the specific inspection unit. It assigns each detection a timestamp and additional contextual information so that the data can be further processed and analyzed.
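The conversion step described above might look roughly like the following. This is a sketch under stated assumptions: the input metadata shape (`label`, `confidence`), the output field names, the `POROSITY_DETECTED` alert type, and the device identifier are all hypothetical, not the actual extension's schema. Publishing over MQTT is left out to keep the example self-contained.

```python
import time


def to_platform_messages(metadata, device_id, now=None):
    """Convert one inference result into telemetry, attributes, and an optional alert.

    `metadata` is assumed to look like {"label": "Porosity", "confidence": 0.93}.
    """
    ts_ms = int((now if now is not None else time.time()) * 1000)
    label = metadata["label"]
    confidence = metadata["confidence"]

    # Time-series data point for dashboards and history.
    telemetry = {"ts": ts_ms, "values": {"label": label, "confidence": confidence}}
    # Slowly changing facts about the inspection unit.
    attributes = {"device_id": device_id, "last_label": label}
    # An alert event is produced only when a defect is identified.
    alert = None
    if label == "Porosity":
        alert = {
            "ts": ts_ms,
            "device_id": device_id,
            "type": "POROSITY_DETECTED",
            "confidence": confidence,
        }
    return telemetry, attributes, alert
```

Keeping the conversion a pure function, separate from the MQTT client, makes it easy to unit-test the mapping without a broker.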

This setup turns raw AI detection outputs into a reliable, real-time monitoring and alerting system that works well for quality inspection processes in factories.

Edge AI Weld Porosity Detection Workflow

The system performs real-time weld porosity detection using a radiography (X-ray) imaging system, which makes internal gas voids within the weld visible. These voids appear as density variations in the radiographic images, and the model analyzes them to detect defects. During welding, these density variations are visible in the live image stream.

The classification

The model watches the active welding area in real time and outputs exactly one of three labels per frame: Good_Weld, No_Weld, or Porosity. These three states are the entire vocabulary that the detection system works with; every decision is built on this simple signal.

How a weld cycle is tracked over time

At 30 FPS, the model generates many frame-level predictions, but the system does not treat each frame as a separate defect.

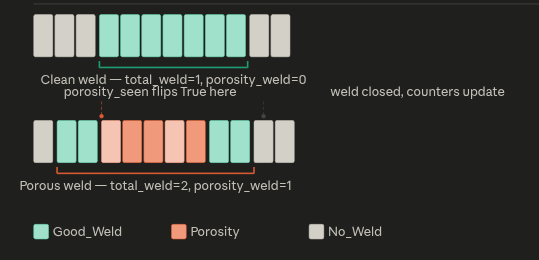

Instead, it tracks a weld cycle with three states: start, active, and end.

The cycle begins when the first Good_Weld frame appears after idle, marking weld_active = True. During this active phase, multiple Porosity frames may occur, but the system only records the first occurrence by setting porosity_seen = True and ignores duplicates.

When a No_Weld frame arrives, the cycle ends. At this point, one weld is counted: total_weld += 1, and if porosity was seen, porosity_weld += 1. A single porosity_event = 1 signal is then sent for alerting.

Finally, the system resets to idle and waits for the next weld cycle.
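The cycle logic above can be sketched as a small state machine. The variable names (`weld_active`, `porosity_seen`, `total_weld`, `porosity_weld`) follow the text. The `WeldCycleTracker` class, the choice to ignore Porosity frames that arrive while idle, and the return convention of `update` are assumptions of this sketch, not necessarily how the published code is structured.

```python
class WeldCycleTracker:
    """Collapse per-frame labels into one verdict per weld cycle."""

    def __init__(self):
        self.weld_active = False
        self.porosity_seen = False
        self.total_weld = 0
        self.porosity_weld = 0

    def update(self, label):
        """Consume one frame label.

        Returns None while idle or mid-cycle. When a cycle ends, returns
        the porosity_event value: 1 if porosity was seen, otherwise 0.
        """
        if label == "Good_Weld":
            self.weld_active = True          # cycle starts (or continues)
        elif label == "Porosity" and self.weld_active:
            self.porosity_seen = True        # repeated frames change nothing
        elif label == "No_Weld" and self.weld_active:
            self.total_weld += 1
            event = 1 if self.porosity_seen else 0
            self.porosity_weld += event
            # Reset to idle and wait for the next cycle.
            self.weld_active = False
            self.porosity_seen = False
            return event
        return None


# Made-up sequence: idle, a clean pass, idle, then a porous pass.
frames = (["No_Weld"] * 2 + ["Good_Weld"] * 4 + ["No_Weld"] * 2
          + ["Good_Weld"] * 2 + ["Porosity"] * 3 + ["Good_Weld"] + ["No_Weld"])
tracker = WeldCycleTracker()
events = [e for e in (tracker.update(f) for f in frames) if e is not None]
```

After this run, `events` is `[0, 1]`, `tracker.total_weld` is 2, and `tracker.porosity_weld` is 1: the three Porosity frames in the second pass produce a single event. At 30 FPS, a 10-second weld yields 300 frame labels, so this collapsing step is what keeps the alert stream readable.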

How the frame timeline actually looks

Below is an example of what the raw label sequence from a camera looks like for two consecutive weld passes, one clean and one porous. Each block is a frame. The system processes them left to right in real time.

Notice that even though multiple Porosity frames appear inside the second cycle, the counter only increments once when the weld closes. The flag-based approach converts a flood of per-frame signals into a single, meaningful per-weld verdict.

What Klyff Inspeqtr receives

Every single frame produces one MQTT publish to Klyff Inspeqtr. The payload carries both the raw per-frame classification result and the rolling cumulative quality statistics, so the dashboard is always up to date without any aggregation lag.
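A per-frame payload of this kind could be assembled as shown below. The field names, and the derived `porosity_rate_pct` value in particular, are assumptions made for this sketch; the actual payload schema is defined in the project's source code.

```python
import json


def build_frame_payload(label, confidence, total_weld, porosity_weld):
    """Combine the current frame's result with the cumulative weld counters."""
    rate = porosity_weld / total_weld if total_weld else 0.0
    return json.dumps({
        # Raw per-frame classification result.
        "label": label,
        "confidence": confidence,
        # Rolling cumulative quality statistics.
        "total_weld": total_weld,
        "porosity_weld": porosity_weld,
        "porosity_rate_pct": round(100 * rate, 2),  # hypothetical derived field
    })
```

Because the counters travel with every message, the dashboard can show the current totals from the latest payload alone, without replaying earlier messages.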

You can find the complete source code on GitHub: https://github.com/KlyffHanger/Inspeqtr-WeldPorosity

Conclusion

Weld porosity detection is a critical use case in manufacturing and fabrication industries, where ensuring weld integrity and preventing internal defects are essential for structural safety and long-term reliability. The Intel Open Edge platform, combined with Klyff Inspeqtr, as demonstrated by the Weld Porosity Detection sample, shows how you can move weld quality inspection to the edge efficiently while maintaining strong observability and flexibility. This approach enables a system that ingests video from radiographic or industrial inspection sources, detects porosity defects in real time, streams annotated results to a browser, and adapts to changing welding conditions, delivering reliable and scalable inspection for industrial environments.

Get in touch with us for help with your Automated Quality Inspection scenarios. We are just a message away.